Operational Validation consists of writing Pester code not to test the script you wrote, but to test that the changes your script makes actually answer your original business need. For example, if you create a firewall rule to open a specific port, with the sole purpose of being able to access a web server and read data from a database, you want to be sure that you can actually interact with that web server and query the needed data. You may have set the firewall rule and opened the right ports, but if another firewall sits between your client and your web server, or if the network cable is simply unplugged, you have still failed to provide the service you intended to, even though the firewall rule itself was implemented successfully.
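To make the firewall example concrete, here is a minimal sketch of what such a test could look like. The host name `web01.contoso.local` and port 443 are made up for illustration, and the test assumes a Windows client where `Test-NetConnection` is available; adapt it to your own environment.

```powershell
# Operational validation sketch: we do not test the firewall rule itself,
# we test that the service behind it is actually usable from the client.
Describe 'Web server is usable from the client' {

    BeforeAll {
        # Hypothetical values, replace with your own server and port.
        $webServer = 'web01.contoso.local'
        $port      = 443
    }

    It 'accepts TCP connections on the expected port' {
        $result = Test-NetConnection -ComputerName $webServer -Port $port -WarningAction SilentlyContinue
        $result.TcpTestSucceeded | Should -Be $true
    }

    It 'answers an HTTP request with status code 200' {
        $response = Invoke-WebRequest -Uri "https://$webServer/" -UseBasicParsing
        $response.StatusCode | Should -Be 200
    }
}
```

If a second firewall blocks the traffic or the cable is unplugged, the first test fails even though the script that created the firewall rule ran without errors, which is exactly the situation Operational Validation is meant to catch.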

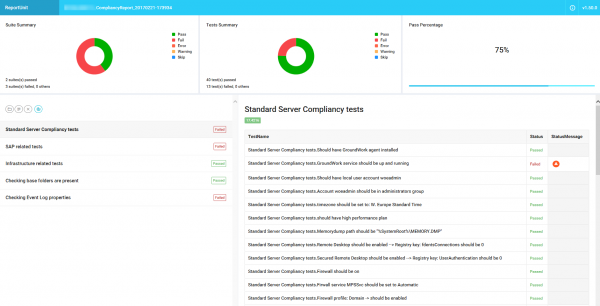

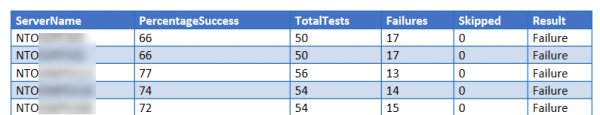

At Windows Operations & Engineering we use Operational Validation to ensure that our servers are compliant with our standards (security, configuration, etc.). This way, we can guarantee that each server we provide is consistent with those standards. The same tests are used again at a later stage to validate that no configuration drift has occurred.

The tests consist of a number of Pester scripts that are deployed to one or more servers. A script executes the Pester tests and collects the results in a standard XML format (NUnit XML). This standard XML allows us to build automation around the results of our tests, and generate really cool reports out of that!
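As an illustration of the kind of automation the NUnit XML makes possible, the sketch below reads a result file and returns a small summary object. The function name `Get-NUnitSummary` is hypothetical and is not the code we run in production. It assumes the NUnit 2.5 style layout that Pester writes, with a `test-results` root element and `test-case` elements carrying a `result` attribute.

```powershell
function Get-NUnitSummary {
    # Hypothetical helper: summarizes one NUnit XML file produced by Pester.
    param(
        [Parameter(Mandatory)]
        [string]$Path
    )

    # Load the whole file as an XML document.
    [xml]$doc = Get-Content -LiteralPath $Path -Raw
    $root = $doc.'test-results'

    # Collect the names of all test cases marked as failed.
    $failed = @(
        $doc.SelectNodes("//test-case[@result='Failure']") |
            ForEach-Object { $_.GetAttribute('name') }
    )

    [pscustomobject]@{
        Total       = [int]$root.total
        Failures    = [int]$root.failures
        Errors      = [int]$root.errors
        FailedTests = $failed
    }
}
```

The result file itself can be produced with `Invoke-Pester -OutputFile <path> -OutputFormat NUnitXml` in Pester 4, or through the `TestResult` section of a `New-PesterConfiguration` object in Pester 5. Calling `Get-NUnitSummary -Path <path>` on each server's file then gives one object per server that can be fed into a report.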

I have written a PowerShell class that we use to generate either an individual report per server or a global report, in different formats (docx, html, etc.).

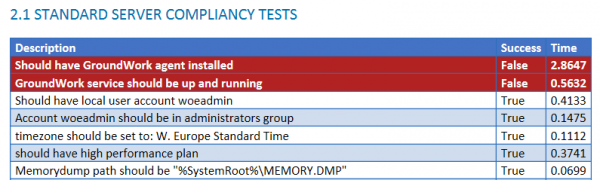

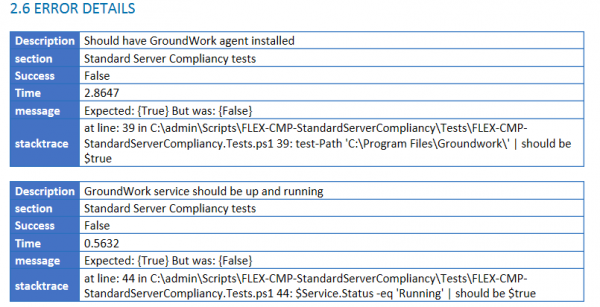

The individual report is based on an open source project called “ReportUnit”; you can see an example below.